Akamai Bot Manager: How It Works and How ScrapeBadger Bypasses It

Akamai is one of the world's largest CDNs and edge security platforms — more than 50% of Fortune 500 companies use Akamai services. Bot Manager is its dedicated bot detection layer, processing an average of 40 billion bot requests per day with access to threat intelligence from across one of the largest traffic networks on the internet.

When your scraper hits a site protected by Akamai Bot Manager, it doesn't usually fail visibly. There's no CAPTCHA page. There's no 403 Forbidden. There's often a clean 200 OK response — with empty data fields, a redirected page, or a completely different HTML document than the one a real browser receives. Akamai doesn't necessarily tell you you're blocked. It just serves you something useless.

This is what makes Akamai the hardest anti-bot system to debug. The failure is silent. And unlike Cloudflare — where a challenge page is at least recognisable — Akamai's most sophisticated deployment quietly degrades your data until someone notices the pipeline is collecting garbage.

This article covers exactly how Akamai Bot Manager works, why every standard bypass approach eventually fails, and how ScrapeBadger's Akamai bypass infrastructure solves all of this without configuration.

Who Uses Akamai and Why It Matters for Scraping

Akamai isn't evenly distributed across the web. Approximately 30% of Fortune 500 sites use some form of Akamai protection, common in retail, airlines, and luxury fashion. More specifically, the sites where Akamai appears most frequently are exactly the sites that generate the highest-value scraping use cases:

Retail and e-commerce — major fashion brands (Net-A-Porter, Farfetch, luxury labels), large retailers, and marketplace platforms with high-velocity price changes and inventory data.

Airlines and travel — most major international carriers deploy Akamai on their booking and fare pages. If you're building a flight price monitor or travel intelligence tool, you're almost certainly dealing with Akamai.

Financial services — banks, trading platforms, financial data portals. Any site where the data is commercially sensitive enough to justify serious infrastructure investment.

Consumer brands with loyalty programs — retailer loyalty portals, ticketing platforms, and consumer brands protecting inventory from scalper bots.

The commercial significance of Akamai-protected targets is why bypassing it correctly matters so much. Getting blocked on a Cloudflare-protected blog is annoying. Getting blocked on an airline fare page or luxury retail catalogue means your competitive intelligence pipeline is empty.

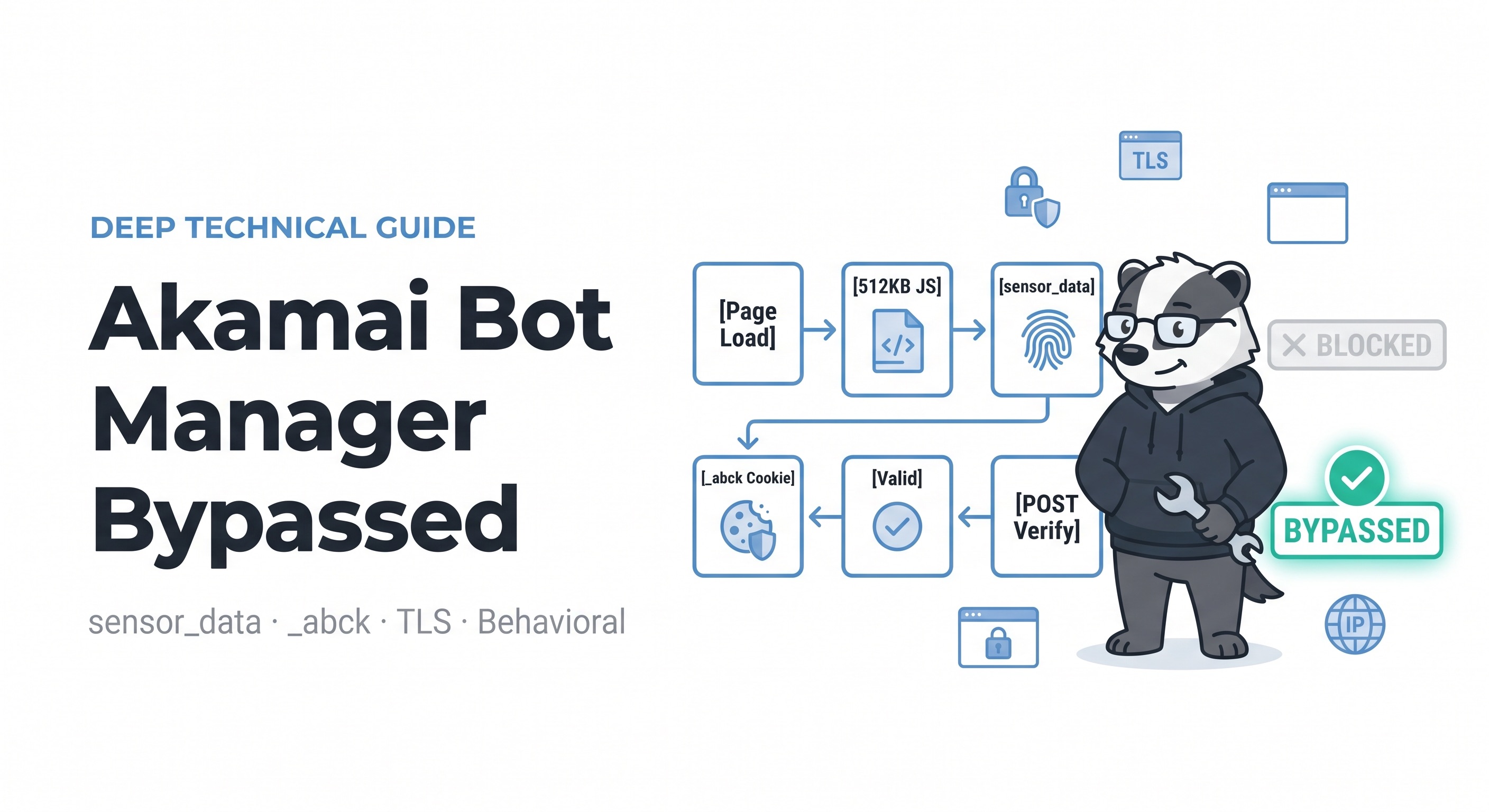

The Akamai Detection Pipeline: What's Actually Happening

Understanding Akamai requires understanding that it's not a single check — it's a pipeline where each stage generates a score, and the scores combine into a final bot confidence value. Akamai applies all detection layers and combines their results into a single bot score from 0 to 100. A standard HTTP client scores near 100 — maximum bot confidence — and is blocked immediately. Use Apify

The complete pipeline runs like this:

Page Load

↓

Akamai Script Loads (512KB obfuscated JavaScript)

↓

Signal Collection (100+ browser, device & behavioral signals)

↓ canvas hash, WebGL GPU, timing,

mouse events, navigator props,

TLS fingerprint, IP reputation

↓

sensor_data = (encrypted payload generated from signals)

↓

POST → /_sec/cp_challenge/verify

(payload sent to Akamai's validation endpoint)

↓

Server validates sensor_data against TLS fingerprint

↓

_abck cookie set (valid session token)

ak_bmsc, bm_sv set (secondary session cookies)

403 Block ← if ANY signal fails validation

200 OK ← if ALL signals passEvery stage of this pipeline is a potential failure point. Miss any one of them and the _abck cookie is either not issued or issued with a -1 validity flag (the ~-1~ segment versus ~0~ in the cookie value — the tell that separates a real clearance from a fake one).

The Six Detection Layers

Layer 1: TLS Fingerprinting (JA3/JA4)

In 2026, TLS fingerprinting is Akamai's most effective single detection mechanism. Every HTTPS connection produces a JA3 hash from the TLS ClientHello — cipher suite order, extensions, and elliptic curves. Akamai maintains a database of real browser TLS profiles and flags requests whose JA3 hash matches no known browser. JA4 (the newer standard) adds additional fingerprint vectors.

This is why any Python requests-based approach fails immediately against Akamai, even with perfect headers. The requests library has a known JA3 fingerprint — 7e262e4d3a3f90eb9de01e5acef8a6ce — that Akamai has in its blocklist. The rejection happens at the TLS handshake level before any HTTP headers are even evaluated. Your headers don't matter if your ClientHello is wrong.

Akamai also inspects HTTP/2 SETTINGS frames. A JA4 hash that doesn't match a known browser profile triggers the bmak script path immediately.

Layer 2: IP Reputation

Datacenter proxies are easily detected by Akamai's IP reputation system. Even with residential proxies, inconsistent TLS fingerprints, missing cookies, or abnormal request patterns can trigger blocks.

Akamai's network processes 40 billion requests daily across thousands of Akamai-protected sites globally. This means an IP that scraped one Akamai customer's site aggressively yesterday is already flagged when it hits a completely different Akamai customer today. The threat intelligence is shared across the entire network — which is what makes Akamai's IP reputation database qualitatively different from Cloudflare's.

Datacenter IPs from AWS, GCP, and major hosting providers are pre-flagged. Residential IPs are necessary but not sufficient — they still need to arrive with matching TLS profiles, correct session history, and coherent behavioral signals.

Layer 3: The sensor_data Payload (The Core Challenge)

What makes Akamai uniquely difficult to bypass is its 512KB of heavily obfuscated JavaScript loaded on every protected page. This script collects over 100 browser, device, and behavioral signals — then encrypts and POSTs them as a sensor_data payload to Akamai's validation endpoint. A valid response sets the _abck cookie — Akamai's primary session clearance token. Without it, every subsequent request is blocked or challenged.

The signal categories collected in the sensor_data payload include:

Browser environment signals: navigator.userAgent, navigator.language, navigator.platform, navigator.hardwareConcurrency, navigator.deviceMemory, navigator.plugins (count and names), navigator.permissions state, window.screen dimensions and color depth.

Canvas fingerprint: The script renders specific geometric shapes and text to a hidden canvas element and hashes the pixel output. Every real GPU/driver combination produces a slightly different rendering. Scrapium patches all of them in Chromium C++, not via JavaScript hooks that toString() inspection exposes.

WebGL renderer and vendor: gl.getParameter(gl.RENDERER) exposes GPU information. In real Chrome this returns something like ANGLE (Intel, Intel(R) UHD Graphics 620 Direct3D11 vs_5_0 ps_5_0, D3D11). In software-rendered headless Chrome it returns Google SwiftShader. Akamai knows this.

AudioContext fingerprint: The AudioContext API processes a short audio buffer and the output varies by hardware. Consistent across sessions from the same device; identifiable as automated when it matches no real hardware profile.

Timing and behavioral telemetry: Mouse movement trajectories, scroll events, keystroke timing, time between page load and first interaction. Humans produce stochastic timing; bots produce either completely uniform timing or obviously fake randomisation.

Font enumeration: What fonts are installed on the device. Real Windows, Mac, and Linux machines have predictable font sets. The absence of any installed fonts, or a font list that doesn't match the declared OS, is a detection signal.

Layer 4: The _abck Cookie Lifecycle

The standard cookies used by Akamai are _abck, ak_bmsc, bm_sv, and bm_mi. The _abck cookie is the primary session clearance token returned when the sensor_data payload is validated. ak_bmsc is HTTP-only — you can't access its content from JavaScript, only from response headers.

The _abck cookie contains a structured value where the ~0~ vs ~-1~ segment reveals validity. A -1 value means the sensor_data was rejected — Akamai accepted the POST but determined the payload was invalid. This is the silent failure mode: you get a cookie, you think you're authenticated, but every subsequent data request returns either empty content or a challenge page.

_abck has a time limit. After being intercepted by a 403 error, the cookie needs to be regenerated. Additionally, Akamai will quietly upgrade sessions from sensor_data only to the pixel round-trip if scores look suspicious — requiring a second challenge type to be solved before clearance is granted.

The cookie expiry cycle varies by target site and Akamai configuration — typically 30 minutes to 2 hours on aggressive deployments. Any production pipeline needs expiry detection and automatic re-generation built in.

Layer 5: Behavioral Analysis

Akamai monitors user interaction patterns to detect automated behavior. Unlike humans, bots exhibit consistent interaction patterns such as identical scrolling behaviors, perfectly timed clicks, and predictable mouse movements. ScrapingBee

This is the layer that breaks sophisticated headless browser setups that have solved every other challenge. A Playwright session with perfect TLS fingerprinting, genuine hardware-matched canvas output, and valid _abck cookies still fails if the session-level navigation pattern doesn't look human.

Akamai's behavioral model is trained on billions of real sessions across its customer network. It looks for:

Mouse movement trajectories that follow physically impossible curves (or perfectly smooth artificial ones)

Scroll velocity distributions that don't match human reading patterns

Time-on-page distributions that are suspiciously uniform across requests

Navigation patterns that jump directly to high-value pages without browsing context

Request timing that's consistent to the millisecond across many sessions from the same IP

Layer 6: The Obfuscation Layer (The Arms Race Engine)

The script URL is per-tenant and the obfuscation seed rotates. Akamai rotates the cookie on a tight cadence. The obfuscation seed changes, the string arrays shuffle, and the timing trap positions move — any deobfuscated Python/Node implementation needs re-generation on every rotation. Scrapfly

This is the engineering cost that makes DIY bypass economically unviable at production scale — the work never ends. Unlike Cloudflare's challenge pages (which have relatively stable structure between major updates), Akamai's obfuscation engine is designed to require continuous reverse-engineering effort to maintain.

Why DIY Akamai Bypass Always Fails Eventually

There are two DIY approaches developers try. Both have the same fundamental problem.

Approach 1: Sensor Data Generator

If you go on any scraping forum and ask how to beat Akamai, you will get pointed at four or five private sensor_data generators. They all do the same thing: take the obfuscated bot script, deobfuscate it, port the VM to Python or Node, feed it fake mouse events and key timings, post the resulting payload to the sensor endpoint, and pray the _abck you get back is valid.

This works. At first. The engineering cost to get it working:

A developer who can read Akamai's obfuscation pipeline — not a junior role

A test harness that compares generated payloads byte-for-byte against real browser dumps

A CI pipeline that re-fetches the bot script hourly (it's per-tenant and the obfuscation seed rotates)

A pixel/challenge solver for when Akamai silently upgrades you

And then it breaks. The arms race is real — detection scripts evolve constantly. Any bypass is temporary. TLS fingerprinting is the hardest barrier — server-side TLS validation is harder to bypass than any client-side JavaScript check.

Approach 2: Headless Browser with Stealth Patches

Use Playwright or Puppeteer with stealth patches to pass the JavaScript fingerprinting layer. This gets you further than raw HTTP requests, but hits the TLS layer.

It is not wise to use Python/Node.js/Go to write code that assembles sensor_data and POSTs it directly — Akamai detects TLS fingerprints at the wire level, independent of what the JavaScript layer does.

Even Playwright with perfect stealth patches fails because:

Playwright's default Chromium build exposes a headless indicator in its TLS stack

Patchwork JavaScript overrides are detectable via

toString()inspectionSession-level behavioral signals accumulate over time and degrade the bot score even with good initial fingerprints

The real issue with both approaches: they're fighting a continuously updating adversary with static tooling. Every Akamai tenant update invalidates your work. Every obfuscation rotation breaks your generator. Every behavioral model update makes your fake mouse events more detectable.

How ScrapeBadger Solves the Akamai Problem

ScrapeBadger's auto-escalation engine executes Akamai's 512KB obfuscated sensor data JavaScript in a genuine browser environment, obtains a valid _abck cookie, and handles TLS fingerprinting and behavioral detection — all without configuration.

The key word is genuine. Rather than attempting to reverse-engineer what Akamai's script does and replicate it in Python or Node.js, ScrapeBadger runs the actual script in an actual browser — a patched Chromium instance with authentic hardware-matched fingerprints at the C++ level, not via JavaScript hook overrides.

This matters for one fundamental reason: Canvas, WebGL, AudioContext, and Navigator properties are patched at the C++ level — not via JavaScript overrides that toString() inspection can expose.

The difference is significant. A JavaScript-level patch looks like this:

javascript

// JavaScript hook — detectable via toString() inspection

Object.defineProperty(HTMLCanvasElement.prototype, 'toDataURL', {

value: function() { return spoofed_canvas_output; }

});

// Akamai's script can call: HTMLCanvasElement.prototype.toDataURL.toString()

// and detect the native code substitutionA C++-level patch is invisible at the JavaScript layer because it modifies the actual rendering engine behaviour — the same mechanism real hardware variation produces. Akamai's detection script calls the canvas API and gets an output that's genuinely consistent with the declared hardware profile, because it is.

The auto-escalation approach

ScrapeBadger's auto-escalation engine executes Akamai's 512KB obfuscated sensor data JavaScript in a genuine browser environment, obtains a valid _abck cookie, and handles TLS fingerprinting and behavioral detection — all without configuration.

"Auto-escalation" means the system automatically determines what level of challenge Akamai is presenting and handles it:

Standard sensor_data flow — browser executes the script, generates valid payload, receives

_abckPixel challenge escalation — when Akamai silently upgrades from sensor_data to a pixel round-trip, ScrapeBadger handles this automatically

Session re-establishment — when

_abckexpires or is invalidated, the pipeline regenerates without interrupting your data collection

Zero failed-request charges. You pay only for successful results. If ScrapeBadger fails to bypass Akamai for any reason, the request is not billed. This is the pricing model that only works if your success rate is genuinely high.

Using ScrapeBadger to Scrape Akamai-Protected Sites

The integration is identical to any ScrapeBadger request. The bypass happens transparently:

python

import requests

API_KEY = "your_scrapebadger_key"

def scrape_akamai_site(url: str) -> dict:

"""

Scrape any Akamai-protected URL.

ScrapeBadger handles sensor_data, _abck, TLS, and behavioral

detection automatically — no configuration required.

"""

response = requests.get(

"https://api.scrapebadger.com/v1/scrape",

headers={"X-API-Key": API_KEY},

params={

"url": url,

"render_js": True, # Required for Akamai (JS-heavy sites)

"wait_for": "networkidle", # Wait for full JS execution

},

timeout=30

)

return response.json()

# Scrape a luxury retail product page (Akamai-protected)

result = scrape_akamai_site("https://www.net-a-porter.com/en-gb/shop/product/123456")

print(result["html"][:1000])For pipelines that need to scrape many pages from the same Akamai-protected domain, session reuse is handled automatically — ScrapeBadger maintains a valid _abck session across multiple requests to the same target rather than re-executing the sensor_data challenge on every call.

Bulk scraping Akamai-protected catalogues

python

import requests

import time

from typing import Optional

API_KEY = "your_scrapebadger_key"

def bulk_scrape(

urls: list[str],

delay_seconds: float = 1.5,

max_retries: int = 2,

) -> list[dict]:

"""

Scrape multiple pages from an Akamai-protected site.

Session management and _abck rotation handled automatically.

"""

results = []

for i, url in enumerate(urls):

print(f"[{i+1}/{len(urls)}] Scraping: {url[:60]}...")

for attempt in range(max_retries):

try:

response = requests.get(

"https://api.scrapebadger.com/v1/scrape",

headers={"X-API-Key": API_KEY},

params={

"url": url,

"render_js": True,

"wait_for": "networkidle",

},

timeout=30

)

data = response.json()

results.append({

"url": url,

"status": "ok",

"html": data.get("html", ""),

})

break # Success — move to next URL

except requests.exceptions.Timeout:

if attempt == max_retries - 1:

results.append({"url": url, "status": "timeout"})

else:

time.sleep(3)

continue

time.sleep(delay_seconds)

return results

# Example: scrape product pages from an airline (Akamai-protected)

flight_pages = [

"https://www.britishairways.com/en-gb/destinations/new-york",

"https://www.britishairways.com/en-gb/information/baggage-essentials",

"https://www.britishairways.com/en-gb/offers/holidays",

]

scraped = bulk_scrape(flight_pages)

successful = [r for r in scraped if r["status"] == "ok"]

print(f"Successfully scraped {len(successful)}/{len(flight_pages)} pages")Sites Commonly Protected by Akamai

These are the categories and platforms where you'll encounter Akamai Bot Manager most frequently — and where ScrapeBadger's bypass infrastructure delivers the most value:

Luxury fashion retail — Net-A-Porter, Farfetch, Matches Fashion, major luxury brand e-commerce sites. High-value inventory data and pricing used for competitive intelligence and resale market monitoring.

Airlines — British Airways, Lufthansa, Japan Airlines, many major carriers. Fare data for price tracking, competitive analysis, and travel intelligence tools. As covered in the ScrapeBadger Google Flights API guide, Google Flights itself aggregates from these airline sites — making Akamai bypass a core infrastructure requirement for travel data pipelines.

Financial services — broker platforms, banking sites, financial data portals. Rate data, product information, and market intelligence.

Consumer electronics retail — Best Buy, major electronics retailers. Stock monitoring, price tracking, product availability. The e-commerce scraping guide on the ScrapeBadger blog covers the full pipeline architecture for these use cases.

Sports and ticketing — live event ticketing platforms, sports merchandise retailers, loyalty programme portals.

Automotive — dealer inventory platforms, manufacturer configurators, used car marketplaces with real-time inventory.

If the site's cookies section shows _abck, ak_bmsc, or bm_sz, you're dealing with Akamai. The presence of these cookies in DevTools → Application → Cookies is the fastest way to identify Akamai-protected targets before writing any scraping code.

Akamai vs. Other Anti-Bot Systems

Developers who've worked with multiple anti-bot systems often ask how Akamai compares to Cloudflare, DataDome, and PerimeterX. The comparison matters because the bypass approach differs significantly.

Akamai integrates with its broader CDN ecosystem, using fingerprinting, behavioral analysis, and CAPTCHA challenges to block bots adaptively. Kasada focuses on proactive prevention, using AI, cryptographic challenges, and JavaScript obfuscation to stop bots early. ScrapingBee

Cloudflare is the most widely deployed but generally the most bypassable with correct TLS fingerprinting and residential proxies. As detailed in the ScrapeBadger Cloudflare bypass guide, standard deployments fall to curl_cffi with the right profile; only Enterprise Bot Management requires the full browser execution approach.

Akamai is harder than standard Cloudflare because the sensor_data pipeline runs deeper into the browser environment, the obfuscation is actively maintained, and the threat intelligence network spans a larger sample of traffic than any other provider. It requires genuine browser execution for reliable bypass.

DataDome uses real-time ML models that analyse session behaviour and respond within milliseconds. Its ScrapeBadger DataDome bypass requires the same genuine browser approach as Akamai but with different session management patterns.

Imperva (Incapsula) is Akamai's closest technical peer in difficulty — similar JavaScript challenge structure with its own obfuscated reese84 challenge payload. The ScrapeBadger Imperva bypass handles both.

PerimeterX uses biometric behavioural analysis with its _px cookie family. The ScrapeBadger PerimeterX bypass handles px cookie validation and the px3 challenge.

ScrapeBadger's bypass infrastructure covers all major anti-bot systems under the same API endpoint. You don't configure which system your target uses — the engine detects it and applies the correct approach automatically. The full documentation at docs.scrapebadger.com covers all supported anti-bot systems.

The True Cost of DIY Akamai Bypass at Production Scale

The ScrapeBadger web scraping cost guide puts concrete numbers on this for general scraping infrastructure. For Akamai specifically, the numbers are more extreme.

The engineering requirements for a production DIY sensor_data generator include: a developer who can read Akamai's obfuscation pipeline (not a junior role); a test harness that compares generated payloads byte-for-byte against real browser dumps; a CI pipeline that re-fetches the bot script on every target hourly because the script URL is per-tenant and the obfuscation seed rotates; and a pixel/challenge solver for when Akamai silently upgrades sessions. Scrapfly

At a realistic senior developer rate of $100/hour, the initial build costs 4–8 weeks minimum — $16,000–32,000. Ongoing maintenance against Akamai's continuous obfuscation rotations is 10–20 hours per month per protected target — $1,000–2,000/month/target.

For teams scraping 5 different Akamai-protected sites, that's $5,000–10,000/month in pure maintenance labour. That's before proxy costs, server infrastructure, monitoring, or the cost of the inevitable failures when a rotation breaks things silently and bad data flows into production for days before anyone notices.

ScrapeBadger's model — pay per successful request, zero charges for failures, no configuration, covered by the same infrastructure that handles Cloudflare, DataDome, Imperva, and PerimeterX — eliminates this entire cost category.

Getting Started

ScrapeBadger's Akamai bypass is available on all plans with 1,000 free credits to test against your specific Akamai-protected targets. No credit card required. The free tier is enough to validate bypass success on your actual URLs before committing to production scale.

bash

# Test Akamai bypass with cURL

curl "https://api.scrapebadger.com/v1/scrape\

?url=https://akamai-protected-site.com/products&render_js=true" \

-H "X-API-Key: YOUR_API_KEY"If the response contains the product data you're looking for, the bypass is working. If you're seeing empty fields or challenge page content, check that render_js=true is set — Akamai's sensor_data challenge requires JavaScript execution and cannot be bypassed with a raw HTTP request.

Full technical documentation for all bypass parameters at docs.scrapebadger.com. The ScrapeBadger Cloudflare bypass guide covers the equivalent technical detail for Cloudflare-protected targets if you're working across both systems.

Written by

Thomas Shultz

Thomas Shultz is the Head of Data at ScrapeBadger, working on public web data, scraping infrastructure, and data reliability. He writes about real-world scraping, data pipelines, and turning unstructured web data into usable signals.

Ready to get started?

Join thousands of developers using ScrapeBadger for their data needs.