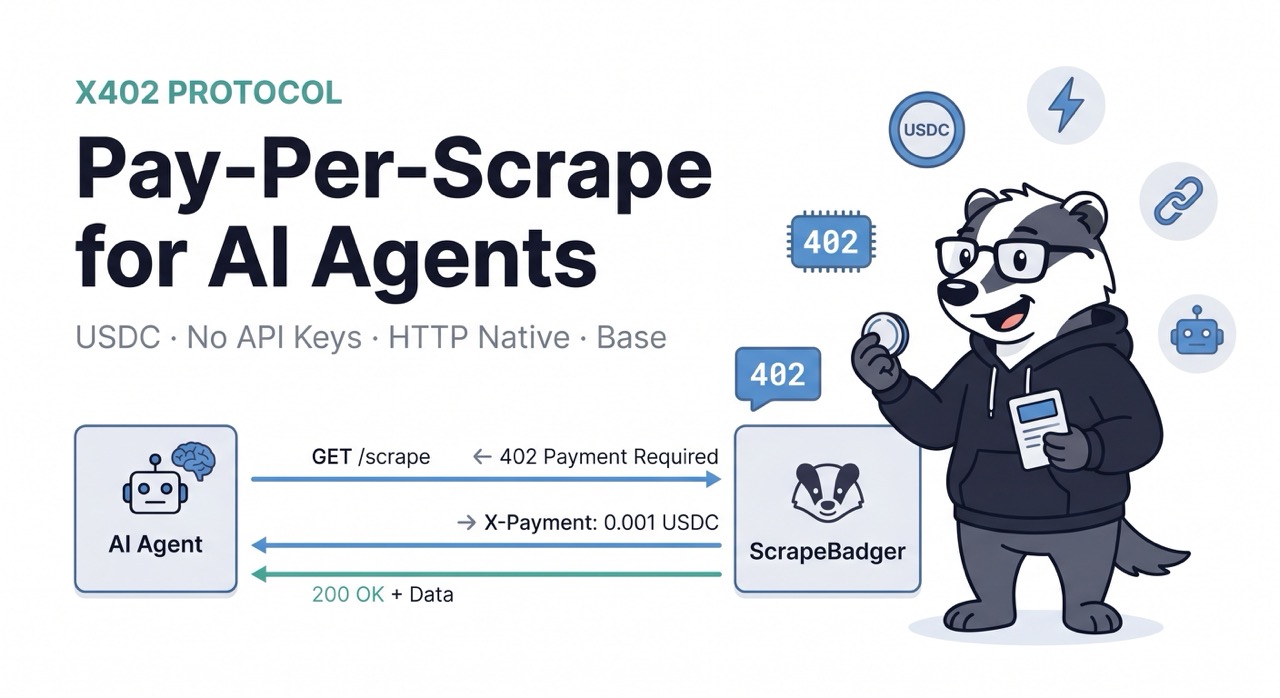

x402 + ScrapeBadger: Pay-Per-Scrape for AI Agents Without API Keys

HTTP status code 402 has been part of HTTP since the 1990s. For roughly three decades it sat unused, officially assigned to "Payment Required" and marked as "reserved for future use." The future arrived in May 2025.

Coinbase launched x402 in May 2025 with a simple premise: kill the API key, enable economic reasoning for LLMs, and close the earn/spend loop on the agentic economy. Since then, it has processed millions of payments. The x402 Foundation, co-founded by Coinbase and Cloudflare, now includes Google and Visa.

By March 2026, the protocol has processed more than 119 million transactions on Base alone. Daily on-chain volume sits around $28,000, and annualized payment volume across the ecosystem is reported at roughly $600 million.

This is not experimental infrastructure. This is a payment protocol that processes more transactions per day than many established fintech products, backed by Coinbase, Cloudflare, Google, and Visa, with a regulatory framework (the GENIUS Act, signed July 2025) and a stablecoin market cap above $230 billion behind it.

For web scraping APIs, x402 represents the most natural fit in the entire ecosystem. ScrapeBadger is building toward x402 integration — and this article explains exactly why, what it looks like technically, and why AI agents running on x402 rails will want ScrapeBadger as their data infrastructure layer.

What x402 Actually Is

x402 revives the long-dormant HTTP 402 "Payment Required" status code and turns it into a native payment step: applications, APIs, and AI agents can send and receive instant, autonomous payments in stablecoins such as USDC and USDT directly over HTTP. This removes the need for subscription walls, redirects, and custom integrations, so a payment feels like a seamless extension of a standard web request. Zyte

The protocol flow is elegant in its simplicity:

```
# 1. Client (agent or app) sends a standard HTTP request to a paid resource
GET /v1/scrape?url=https://example.com HTTP/1.1

# 2. Server responds with HTTP 402 and payment instructions
HTTP/1.1 402 Payment Required
X-Payment-Required: {"amount": "0.001", "currency": "USDC", "network": "base", "address": "0x..."}

# 3. Client signs a USDC micropayment authorization and retries
GET /v1/scrape?url=https://example.com HTTP/1.1
X-Payment: {"signature": "0x...", "amount": "0.001", "network": "base"}

# 4. Server verifies the payment on-chain and returns the resource
HTTP/1.1 200 OK

{"html": "...", "status": "success"}
```

The header and field names above are simplified for illustration; the x402 specification defines the exact wire format.

When any agent receives an HTTP 402 response from a paid API, it autonomously signs and broadcasts a USDC micropayment on Base, retrieves the payment token, and retries the call without human intervention. Bright Data

No account creation. No API key management. No invoices. No subscription billing. No human approving a credit card charge. Instead, x402 enables low-fee, automated payments using stablecoins on fast blockchains like Base and Solana. Zyte
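The server side of the four-step flow above can be sketched as a pure function. This is a minimal illustration, assuming the simplified header names used in this article (`X-Payment-Required`, `X-Payment`). The names `handle_scrape_request` and `verify_payment`, along with the price and address constants, are hypothetical. A real deployment would validate the signed authorization through an x402 facilitator or on-chain rather than with the placeholder check shown here.

```python
import json
from decimal import Decimal

# Hypothetical values for illustration only.
PRICE_USDC = Decimal("0.001")
PAY_TO_ADDRESS = "0xYourReceivingAddress"
NETWORK = "base"


def verify_payment(payment: dict) -> bool:
    """Placeholder check. A real server would validate the signature and
    settle the payment before trusting it."""
    try:
        amount_ok = Decimal(str(payment.get("amount", "0"))) >= PRICE_USDC
    except ArithmeticError:  # raised for non-numeric amounts
        return False
    return (
        bool(payment.get("signature"))
        and amount_ok
        and payment.get("network") == NETWORK
    )


def handle_scrape_request(headers: dict) -> tuple[int, dict, str]:
    """Return (status, response_headers, body) for a paid scrape endpoint."""
    raw_payment = headers.get("X-Payment")
    if raw_payment is None:
        # Step 2: no payment attached, so answer 402 with instructions.
        requirement = {
            "amount": str(PRICE_USDC),
            "currency": "USDC",
            "network": NETWORK,
            "address": PAY_TO_ADDRESS,
        }
        return 402, {"X-Payment-Required": json.dumps(requirement)}, ""

    try:
        payment = json.loads(raw_payment)
    except json.JSONDecodeError:
        return 400, {}, json.dumps({"error": "malformed X-Payment header"})

    if not isinstance(payment, dict) or not verify_payment(payment):
        return 402, {}, json.dumps({"error": "payment rejected"})

    # Step 4: payment accepted, return the resource.
    return 200, {}, json.dumps({"html": "...", "status": "success"})
```

Keeping the handler free of any web framework makes the 402 decision easy to test in isolation; the same function could sit behind whatever HTTP server you already run.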

Why Scraping APIs Are the Perfect x402 Use Case

Web scraping is structurally one of the best fits for x402 in the entire API economy. Here's why.

Consumption is fundamentally per-request. When you buy a scraping API subscription, you're buying a block of credits or a monthly request allowance, then hoping your actual usage matches what you bought. Under-buy and you hit limits mid-pipeline. Over-buy and you pay for idle credits. x402 eliminates this entirely: you pay for exactly what you use, at the moment you use it, with no prepaid credit block to size in advance.

AI agents consume scraping APIs at unpredictable volumes. A research agent might make 3 scraping calls on Monday and 3,000 on Friday. A market intelligence agent might be dormant for a week and then run 50,000 calls during a breaking news cycle. Traditional subscription models are poorly suited to this. These agents need to pay for things, and they cannot fill out credit card forms or wait for invoice approvals. They need a payment mechanism that is as programmable and instant as the HTTP calls they are already making. ScrapingBee

The data is public, the access is paid. The web data ScrapeBadger retrieves — product prices, search results, Maps reviews, flight fares, news articles — is publicly visible to any browser. What you're paying for is the infrastructure: proxy rotation, anti-bot bypass, JavaScript rendering, structured JSON output. This is exactly the kind of service that x402 was designed to monetise: the infrastructure layer that makes public resources accessible at scale.

No account = no friction for autonomous workflows. The biggest practical barrier to AI agents consuming more web data isn't cost — it's the human-in-the-loop billing setup. Before an agent can call ScrapeBadger today, a human has to sign up, enter a credit card, receive an API key, store it in environment variables, and manage credit top-ups. Under x402, an agent with a funded wallet can start making scraping calls immediately — no human involved at any stage.
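To make the under-buy and over-buy argument concrete, here is a small cost comparison. All numbers are hypothetical: the plan price, the included request count, and the $0.001 per-request fee are assumptions for illustration, not ScrapeBadger pricing.

```python
from decimal import Decimal

# Hypothetical numbers for illustration only.
PLAN_PRICE = Decimal("29.00")   # flat monthly subscription
PLAN_INCLUDED = 10_000          # requests included in the plan
UNIT_PRICE = Decimal("0.001")   # assumed x402 fee per request, in USDC


def subscription_outcome(requests_needed: int) -> tuple[Decimal, int, int]:
    """Return (cost, idle_credits, blocked_requests) under the flat plan."""
    idle = max(0, PLAN_INCLUDED - requests_needed)
    blocked = max(0, requests_needed - PLAN_INCLUDED)
    return PLAN_PRICE, idle, blocked


def pay_per_request_cost(requests_needed: int) -> Decimal:
    """Cost when every request is paid for individually."""
    return UNIT_PRICE * requests_needed


if __name__ == "__main__":
    for label, needed in [("quiet month", 470), ("busy month", 34_000)]:
        cost, idle, blocked = subscription_outcome(needed)
        print(
            f"{label}: plan ${cost} ({idle} idle, {blocked} blocked) "
            f"vs pay-per-request ${pay_per_request_cost(needed)}"
        )
```

In the quiet month most of the plan goes unused; in the busy month the plan runs out partway through the job. `Decimal` is used instead of `float` so that sub-cent amounts add up exactly.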

The ScrapeBadger x402 Integration

ScrapeBadger is building toward native x402 support — making every scraping endpoint accessible with per-request USDC micropayments on Base, with no API key required for x402-enabled clients.

The vision is straightforward: an AI agent that needs live web data sends a request to ScrapeBadger's endpoint. If the agent doesn't have a traditional API key, it receives an HTTP 402 response with payment instructions. The agent pays the per-request fee in USDC, retries the request with the payment proof, and receives the scraped data. The entire interaction takes under a second.

Here's what that looks like in a Python agent implementation:

```python
import asyncio
import json
import os

import httpx

# Placeholder wallet interface: CoinbaseWallet, from_seed, and sign_transfer
# stand in for whichever x402-compatible wallet SDK you use.
from coinbase_agentkit import CoinbaseWallet


class X402ScrapeBadgerClient:
    """
    ScrapeBadger client with native x402 payment support.
    No API key required; pays per request in USDC on Base.
    """

    def __init__(self, wallet: CoinbaseWallet):
        self.wallet = wallet
        self.base_url = "https://api.scrapebadger.com"

    async def scrape(self, url: str, render_js: bool = True) -> dict:
        """
        Scrape a URL with automatic x402 payment handling.
        If the server returns 402, the agent pays and retries automatically.
        """
        endpoint = f"{self.base_url}/v1/scrape"
        params = {"url": url, "render_js": render_js}

        async with httpx.AsyncClient() as client:
            # First attempt: no payment header
            response = await client.get(endpoint, params=params)

            # Handle the x402 payment flow
            if response.status_code == 402:
                # Payment requirements arrive in the X-Payment-Required
                # header, as in the protocol flow shown earlier
                payment_details = json.loads(response.headers["X-Payment-Required"])
                amount = payment_details["amount"]      # e.g. "0.001"
                currency = payment_details["currency"]  # "USDC"
                network = payment_details["network"]    # "base"
                address = payment_details["address"]    # ScrapeBadger's wallet

                print(f"Payment required: {amount} {currency} on {network}")

                # Sign the micropayment authorization
                payment_signature = await self.wallet.sign_transfer(
                    amount=amount,
                    currency=currency,
                    network=network,
                    to_address=address,
                )

                # Retry with the payment proof in the X-Payment header
                payment_header = json.dumps({
                    "signature": payment_signature,
                    "amount": amount,
                    "currency": currency,
                    "network": network,
                })
                response = await client.get(
                    endpoint,
                    params=params,
                    headers={"X-Payment": payment_header},
                )

            response.raise_for_status()
            return response.json()


async def main() -> None:
    # Agent usage: no API key, no subscription, no human setup.
    # AGENT_WALLET_SEED is an assumed environment variable name.
    wallet = CoinbaseWallet.from_seed(os.environ["AGENT_WALLET_SEED"])
    scraper = X402ScrapeBadgerClient(wallet=wallet)

    # The agent makes the scraping call autonomously and pays per request
    product_data = await scraper.scrape(
        "https://www.amazon.com/dp/B09V3KXJPB",
        render_js=True,
    )
    print(product_data["html"][:500])


asyncio.run(main())
```

The agent requests a resource, receives an HTTP 402 response containing payment instructions, signs a USDC micropayment authorization, and resubmits the request — all without human intervention. ScraperAPI

x402 V2: Reusable Sessions for Production Scraping Pipelines

In December 2025, x402 V2 added reusable sessions, multi-chain support, and automatic service discovery — features designed for the high-frequency, multi-step workflows that agents require. Oxylabs

The reusable session feature is particularly important for scraping. Rather than signing a micropayment on every single request — which would make high-volume scraping expensive in transaction fees — x402 V2 allows a client to pre-authorize a session budget:

```python
import asyncio
import os

import httpx

# Placeholder wallet interface: CoinbaseWallet, from_seed, and
# sign_session_authorization stand in for an x402-compatible wallet SDK.
from coinbase_agentkit import CoinbaseWallet

BASE_URL = "https://api.scrapebadger.com"
ASSUMED_PRICE_USDC = 0.001  # assumed per-request price, used only for the spend estimate


class X402ScrapeBadgerSession:
    """
    x402 V2 session-based scraping: authorize a budget once,
    then make multiple requests within the session.
    Ideal for large batch scraping jobs.
    """

    def __init__(self, wallet: CoinbaseWallet, session_budget_usdc: float = 5.0):
        self.wallet = wallet
        self.session_budget = session_budget_usdc
        self.session_token = None
        self.requests_made = 0

    async def initialize_session(self):
        """
        Pre-authorize a scraping session with a budget.
        One payment for many requests; more efficient than per-request signing.
        """
        async with httpx.AsyncClient() as client:
            # Request session initialization
            response = await client.post(
                f"{BASE_URL}/v1/session/x402",
                json={"budget_usdc": self.session_budget},
            )
            if response.status_code != 402:
                # Anything other than a payment challenge is unexpected here
                response.raise_for_status()
                raise RuntimeError("Expected a 402 payment challenge for session setup")

            payment_details = response.json()

            # Sign the session authorization
            signature = await self.wallet.sign_session_authorization(
                amount=str(self.session_budget),
                currency="USDC",
                network="base",
                address=payment_details["address"],
                session_id=payment_details["session_id"],
            )

            # Activate the session
            session_response = await client.post(
                f"{BASE_URL}/v1/session/x402/activate",
                json={
                    "session_id": payment_details["session_id"],
                    "signature": signature,
                },
            )
            session_response.raise_for_status()
            self.session_token = session_response.json()["session_token"]
            print(f"Session authorized: ${self.session_budget} USDC budget")

    async def scrape(self, url: str, **kwargs) -> dict:
        """Make a scraping call within the authorized session."""
        if not self.session_token:
            await self.initialize_session()

        async with httpx.AsyncClient() as client:
            response = await client.get(
                f"{BASE_URL}/v1/scrape",
                params={"url": url, **kwargs},
                headers={"X-Session-Token": self.session_token},
            )
            response.raise_for_status()
            self.requests_made += 1
            return response.json()


async def main() -> None:
    # Batch scraping job: one payment authorization for the whole run.
    # AGENT_WALLET_SEED is an assumed environment variable name.
    wallet = CoinbaseWallet.from_seed(os.environ["AGENT_WALLET_SEED"])
    session = X402ScrapeBadgerSession(wallet=wallet, session_budget_usdc=10.0)

    urls = [
        "https://competitor.com/products/item-1",
        "https://competitor.com/products/item-2",
        # ... hundreds more
    ]

    results = []
    for url in urls:
        data = await session.scrape(url, render_js=True)
        results.append(data)

    spent = session.requests_made * ASSUMED_PRICE_USDC
    print(f"Scraped {session.requests_made} pages, spent ~${spent:.3f} USDC")


asyncio.run(main())
```

What This Means for AI Agent Developers

If you're building AI agents today — whether on Claude, GPT-4o, Gemini, or open models — and those agents need live web data, the friction point is usually the infrastructure setup. The agent itself can reason, plan, and execute, but it can't provision a ScrapeBadger account, enter billing details, or manage API key rotation. That part still requires a human.

x402 removes that requirement entirely. An agent with a funded wallet and x402 support can discover ScrapeBadger's endpoint, understand the per-request pricing, pay for what it needs, and consume the data — without a human in the loop at any stage.

Erik Reppel predicts "2026 will be the year of agentic payments, where AI systems programmatically buy services like compute and data. Most people will not even know they are using crypto. They will see an AI balance go down five dollars, and the payment settles instantly with stablecoins behind the scenes." Actowiz Solutions

This is the model that makes sense for web data specifically. A research agent's monthly scraping bill might be $0.47 one month and $34 the next, depending on what it's investigating. Pre-buying credits doesn't fit that usage pattern. x402 pay-as-you-go does.

The ScrapeBadger MCP integration already handles the tool-call side of this — any MCP-compatible agent can call ScrapeBadger endpoints for live web data today. x402 adds the payment primitive that makes this fully autonomous: the agent discovers the service, evaluates whether the cost is within its budget policy, pays, and uses the data without ever needing a pre-configured API key.
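The "evaluates whether the cost is within its budget policy" step can be sketched as a small guard that the agent consults before signing any payment. `BudgetPolicy` and its limits are hypothetical names chosen for this illustration; they are not part of x402 or of any ScrapeBadger SDK.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class BudgetPolicy:
    """Spending limits an agent enforces on itself, in USDC."""

    max_per_request: Decimal
    max_total: Decimal
    spent: Decimal = Decimal("0")

    def approve(self, quoted_amount: str) -> bool:
        """Return True and record the spend if the quote fits the policy."""
        amount = Decimal(quoted_amount)
        if amount <= 0 or amount > self.max_per_request:
            return False  # malformed quote, or a single call that costs too much
        if self.spent + amount > self.max_total:
            return False  # would exceed the overall budget
        self.spent += amount
        return True


if __name__ == "__main__":
    policy = BudgetPolicy(max_per_request=Decimal("0.01"), max_total=Decimal("5"))
    quote = "0.001"  # amount quoted in a 402 response
    if policy.approve(quote):
        print(f"Paying {quote} USDC, total spent {policy.spent}")
    else:
        print("Quote rejected by budget policy")
```

Checking the quote before signing keeps the decision on the agent's side: a mispriced or hostile endpoint can ask for any amount, but the wallet only signs what the policy allows.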

x402 + Every ScrapeBadger Endpoint

x402 support would apply across the full ScrapeBadger product range — not just general web scraping, but every specialised endpoint:

Google SERP and Search endpoints: an agent monitoring keyword rankings or researching competitive intelligence pays per SERP query in USDC. Each of the 18 Google products in the Google Scraper suite would become an independently discoverable and payable resource.

Google Maps: agents doing local business research or reputation monitoring pay per place search or review batch. The Maps scraping infrastructure returns review text, ratings, and place details at per-request cost.

Google Flights: travel agents and price monitoring tools pay per flight search in USDC. The Google Flights API returns real-time fares, carbon emissions, and booking tokens per query.

Google Trends: market research agents pay per Trends query. The Trends API returns interest over time and rising queries that feed content strategy decisions.

Anti-bot protected targets: the Cloudflare bypass, Akamai bypass, and other anti-bot infrastructure sit behind x402 endpoints, with per-request pricing that reflects the infrastructure cost of the bypass.

In an x402-native world, every ScrapeBadger endpoint becomes a discoverable, payable resource — and an agent with a wallet and a task can assemble its own data pipeline by paying for exactly the services it needs, at the moment it needs them.
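As a rough illustration of an agent pricing out its own pipeline, the sketch below totals a request plan against a price table and converts the result to USDC base units (USDC uses six decimal places, so 1 USDC is 1,000,000 units). The endpoint paths and prices are invented for the example and do not reflect real ScrapeBadger endpoints or pricing.

```python
from decimal import Decimal

USDC_DECIMALS = 6

# Hypothetical endpoint paths and per-request prices, in USDC.
HYPOTHETICAL_PRICES = {
    "/v1/scrape": Decimal("0.001"),
    "/v1/google/search": Decimal("0.002"),
    "/v1/google/maps/reviews": Decimal("0.003"),
}


def estimate_pipeline_cost(plan: dict[str, int]) -> Decimal:
    """Total USDC cost of a plan mapping endpoint path -> request count."""
    return sum(
        (HYPOTHETICAL_PRICES[endpoint] * count for endpoint, count in plan.items()),
        Decimal("0"),
    )


def to_base_units(amount: Decimal) -> int:
    """Convert a USDC amount to integer base units (6 decimal places)."""
    scaled = amount * (10 ** USDC_DECIMALS)
    if scaled != scaled.to_integral_value():
        raise ValueError("amount has more precision than USDC supports")
    return int(scaled)


if __name__ == "__main__":
    plan = {"/v1/google/search": 200, "/v1/scrape": 1500}
    total = estimate_pipeline_cost(plan)
    print(f"Estimated cost: {total} USDC ({to_base_units(total)} base units)")
```

Working in integer base units at the payment boundary avoids rounding surprises when many sub-cent charges are summed.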

The Bigger Picture: Scraping APIs as x402 Infrastructure

x402 is an HTTP-native payment protocol that lets autonomous agents and APIs execute micropayments per request, without human intervention or account setup. The core unlock is not cost optimization. It is making payments as composable as the APIs they unlock.

The web scraping layer is the data acquisition primitive of the agentic economy. Agents that can reason, plan, and act — but have no way to access live information from the web — are fundamentally limited. Financial services organisations have invested significantly in AI, deploying agents that can analyse market data, assess credit risk, monitor compliance, and generate insights at a speed and scale no human team can match. All of those use cases require current data, and most of that current data lives on web pages that require scraping infrastructure to access programmatically.

x402 is the payment rail. ScrapeBadger is the data infrastructure. The combination — agent requests data, pays per request in USDC, gets structured JSON back — is the architecture that makes fully autonomous data pipelines possible without any human provisioning step.

If you're building on x402 and need web data infrastructure, or if you're building AI agents and want to eliminate the API key provisioning step from your data layer, reach out to the ScrapeBadger team. The x402 integration is on the roadmap and we want to build it with the teams who need it most.

Frequently Asked Questions

What is x402? x402 is an open payment protocol that revives the HTTP 402 "Payment Required" status code to enable instant stablecoin micropayments between clients and servers — including AI agents and APIs — without accounts, API keys, or human intervention. Zyte

Why is x402 a good fit for scraping APIs? Scraping consumption is per-request and often unpredictable in volume — exactly the use case x402 was designed for. An agent can pay for exactly the data it needs, in the moment it needs it, without pre-funding credits or managing subscriptions.

What currencies does x402 support? x402 supports USDC and USDT as primary stablecoin options, running on fast, low-cost blockchains including Base and Solana. x402 V2 added multi-chain support across additional networks. Zyte

What's the typical fee per request under x402? x402 enables micropayments as low as $0.001 per transaction with sub-second settlement times and near-zero costs. For a scraping API, the per-request fee would reflect the infrastructure cost of the call — proxy routing, anti-bot bypass, JavaScript rendering — priced transparently before the agent pays.

Is x402 production-ready in 2026? Since x402 launched in May 2025, it has processed millions of payments. The x402 Foundation now includes Google and Visa. AWS launched Amazon Bedrock AgentCore Payments in May 2026 with native x402 support. The protocol is no longer experimental.

When will ScrapeBadger support x402? We're actively evaluating x402 integration and want to build it with the teams who need it. Register your interest at scrapebadger.com and we'll reach out as the integration moves forward.

Written by

Domas Sakavickas

Domas Sakavickas is the Co-founder of ScrapeBadger, building web scraping infrastructure for developers and data teams. He writes about the web data market, tool comparisons, business use cases for scraping, and what it takes to turn public web data into a competitive advantage.
