How to Export Twitter Data to Google Sheets Automatically

Most Twitter data workflows end the same way: someone exports a CSV manually, pastes it into a spreadsheet, and spends twenty minutes cleaning up the columns. Then they do it again next week. And the week after that.

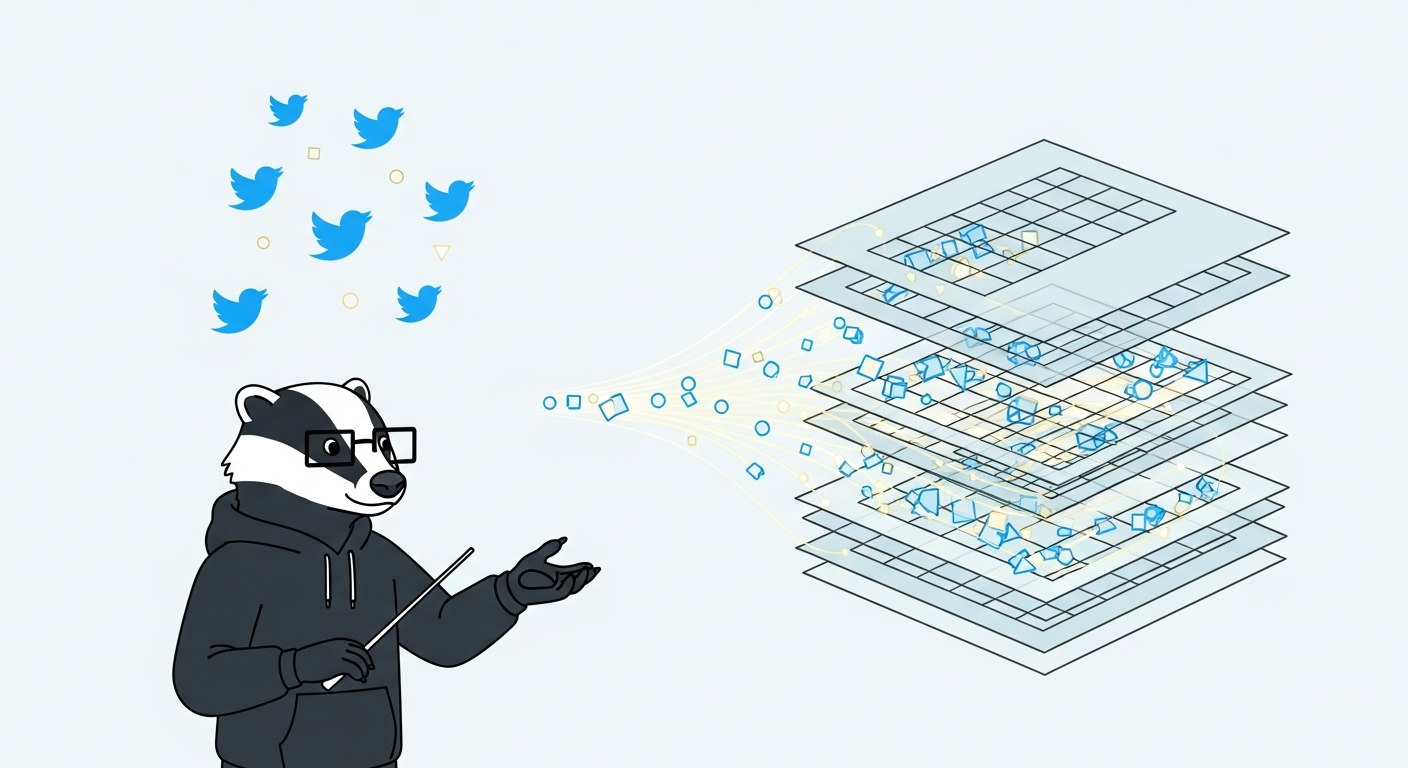

If you're tracking keywords, monitoring mentions, or pulling tweet metrics for reporting, there's no reason to do this by hand. A properly set up pipeline exports Twitter data to Google Sheets automatically, on a schedule, without you touching it. This guide covers four practical approaches — from zero-code to fully custom — so you can pick the one that matches your situation.

Why Google Sheets Is a Reasonable Target

Google Sheets isn't a data warehouse, and you shouldn't treat it like one. But for teams that want to monitor trends, share reports with non-technical stakeholders, or feed data into a simple dashboard, it's a practical and accessible destination.

The main limitations to know upfront: Sheets handles up to around 10 million cells per spreadsheet, and it starts to slow down noticeably well before that. The Twitter API returns a maximum of 100 records per request, so collecting large volumes requires pagination. And if you're pulling data frequently across multiple keywords, you'll burn through API credits faster than you expect.

For use cases like daily keyword monitoring, weekly brand report exports, or ad-hoc research pulls, Sheets works well. For high-volume pipelines processing thousands of tweets per hour, you want a database, not a spreadsheet.

Four Approaches Worth Knowing About

Option 1: IFTTT or Make (No Code)

The fastest setup is a no-code automation. IFTTT and Make (formerly Integromat) both offer Twitter-to-Sheets connectors that can be configured in under 10 minutes. You define a trigger (a new tweet matching a keyword, a new post from a specific account) and an action (append a row to a spreadsheet).

The trade-off is real: these platforms give you limited control over what data gets written, the column structure is mostly fixed, and pagination is handled (or not handled) by the platform itself. IFTTT in particular runs imports when you open the spreadsheet rather than on a true schedule, which isn't the same thing as automated.

Good for: casual monitoring, non-technical teams, simple one-keyword use cases.

Not good for: multiple keywords, structured schemas, anything you need to rely on long-term.

Option 2: Google Sheets Add-ons (API Connector, Apipheny)

API Connector and Apipheny are Google Sheets add-ons that let you connect directly to an API endpoint and import the response into a sheet. You configure the URL, headers, and parameters inside the add-on, then schedule it to run at intervals.

The setup takes 15–30 minutes and requires you to have an API key from your data source. You can use Apipheny to call the ScrapeBadger API directly — paste the endpoint URL, add your x-api-key header, configure query parameters (keyword, limit, cursor), and point it at your target sheet.

One practical note on credentials: these add-ons use query parameters by default. That means your API key can end up in the URL, which may appear in server logs or browser history. If you're using the query parameter method:

https://scrapebadger.com/v1/twitter/tweets/advanced_search?query=yourkeyword&api_key=sb_live_xxxx

It works, but use it with awareness. For production setups, Google Apps Script (covered below) gives you header-based authentication instead.

Good for: developers who want API access without writing code, moderate complexity, scheduled imports.

Not good for: high-frequency pulls, multi-step pipelines, anything requiring deduplication logic.

Option 3: n8n Workflow (Low-Code)

n8n sits between no-code and custom code. You build a workflow visually, but you have access to real logic: conditional branches, data transformation, pagination loops, and webhook triggers.

A working Twitter-to-Sheets workflow in n8n looks like this:

Schedule Trigger → HTTP Request (ScrapeBadger) → Split Out → Google Sheets (Append Row)

The HTTP Request node calls the ScrapeBadger advanced search endpoint:

GET https://scrapebadger.com/v1/twitter/tweets/advanced_search

You add your x-api-key as a header credential, set your query parameters (keyword, result type), and wire the output into a Split Out node to turn the response array into individual items. Each item then writes a row to your sheet.

This approach gives you proper scheduling, real pagination control, and the ability to add a deduplication step using a local data store or by checking existing sheet IDs. If you want a deeper walkthrough of the n8n integration specifically, the n8n + ScrapeBadger guide covers the full setup.

Good for: teams that want automation without writing Python, moderate to complex workflows, self-hosted setups.

Option 4: Google Apps Script (Fully Custom)

Apps Script is JavaScript that runs inside Google's infrastructure. You write a function that calls an API, parses the response, and writes rows to a sheet — then attach it to a time-based trigger so it runs automatically. This is the most flexible approach and the only one that gives you full control over schema, deduplication, error handling, and logging.

Here's a minimal working example that pulls tweets for a keyword and appends them to a sheet:

function fetchTweets() {

var apiKey = "sb_live_xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx"; // Store in Script Properties instead

var keyword = "your keyword here";

var url = "https://scrapebadger.com/v1/twitter/tweets/advanced_search?query=" + encodeURIComponent(keyword) + "&type=Latest";

var options = {

method: "GET",

headers: {

"x-api-key": apiKey

},

muteHttpExceptions: true

};

var response = UrlFetchApp.fetch(url, options);

var statusCode = response.getResponseCode();

// Handle error states before parsing

if (statusCode === 401) {

Logger.log("Unauthorized: check your API key");

return;

}

if (statusCode === 402) {

Logger.log("Out of credits");

return;

}

var data = JSON.parse(response.getContentText());

var tweets = data.data || [];

var sheet = SpreadsheetApp.getActiveSpreadsheet().getSheetByName("Tweets");

// Write headers if sheet is empty

if (sheet.getLastRow() === 0) {

sheet.appendRow(["tweet_id", "username", "text", "created_at", "likes", "retweets", "replies"]);

}

// Collect existing tweet IDs to deduplicate

var existingIds = sheet.getRange(2, 1, Math.max(sheet.getLastRow() - 1, 1), 1).getValues().flat();

tweets.forEach(function(tweet) {

var tweetId = String(tweet.id || "");

if (!tweetId || existingIds.indexOf(tweetId) !== -1) return; // Skip duplicates

var user = tweet.user || {};

var metrics = tweet.public_metrics || {};

sheet.appendRow([

tweetId,

user.username || "",

tweet.text || "",

tweet.created_at || "",

metrics.like_count || 0,

metrics.retweet_count || 0,

metrics.reply_count || 0

]);

});

Logger.log("Done. Wrote " + tweets.length + " tweets.");

}

A few things worth noting in this script:

Don't hardcode the API key in the script body. Use Script Properties instead:

PropertiesService.getScriptProperties().getProperty("SB_API_KEY"). This keeps the key out of source control and shared script histories.The deduplication check reads existing tweet IDs from column A before writing. For large sheets, this gets slow. If you're running this daily, keep a separate "seen IDs" tab with a smaller, rolling window instead.

Error handling covers the three most likely states from the ScrapeBadger API:

401(bad key),402(out of credits),403(key disabled). Log these explicitly so you know why the script stopped.

To schedule it: open the Apps Script editor → Triggers → Add Trigger → fetchTweets → Time-driven → every hour (or whatever interval makes sense).

Good for: developers who want full control, complex schemas, deduplication, error alerting.

Choosing the Right Approach

Method | Setup Time | Technical Skill | Deduplication | Scheduling | Best For |

|---|---|---|---|---|---|

IFTTT / Make | Under 10 minutes | None | Limited | Approximate | Casual monitoring, quick starts |

Sheets Add-ons (Apipheny) | 15–30 minutes | Low | None built-in | Yes | API access without code |

n8n workflow | 30–60 minutes | Low–Medium | With data store | Yes | Visual pipelines, self-hosted |

Google Apps Script | 1–2 hours | Medium | Full control | Yes | Production, custom schemas |

What Data to Expect in Your Sheet

A well-structured export gives you one row per tweet with consistent columns. At minimum, these fields cover most reporting needs:

Column | Source Field | Notes |

|---|---|---|

|

| Use as primary key for deduplication |

|

| ISO 8601 timestamp |

|

| Nested field — extract explicitly |

|

| Full tweet text |

|

| May be 0, not null |

|

| Same |

|

| Same |

Treat your column headers as a contract. Once downstream reports or formulas depend on them, renaming columns breaks things quietly. Define the schema once and stick to it.

Practical Gotchas Before You Ship

Tweet IDs are too large for Sheets to handle as numbers. Google Sheets uses 64-bit floats, which only reliably represent integers up to 15 digits. Twitter IDs are 18–19 digits. Always store tweet IDs as strings (wrap them in String() in Apps Script, or prefix with ' in the sheet).

Pagination isn't free. The ScrapeBadger API handles cursor-based pagination internally through the SDK, but if you're calling the REST endpoint directly from Apps Script, you'll need to handle cursor tokens manually. Start with a bounded max_items per run to keep things predictable.

Scheduled runs can fail silently. A cron job or Apps Script trigger that errors out won't notify you unless you build alerting in. Add a simple MailApp.sendEmail() call in your catch block, or check the Apps Script execution logs weekly.

For anyone building a more complete pipeline — with sentiment analysis, trend tracking, or branching alert logic — how to build a Twitter alert system for your startup covers those downstream layers in more detail.

FAQ

Can I export Twitter data to Google Sheets without coding?

Yes. IFTTT and Make both offer no-code Twitter-to-Sheets connectors. Google Sheets add-ons like Apipheny let you configure API calls inside the sheet without writing code. These are good starting points, though they have less control over schema and deduplication compared to scripted approaches.

How do I avoid duplicate tweets when running exports on a schedule?

Use tweet ID as your primary key. In Apps Script, read existing IDs from the sheet before each run and skip any tweets already present. For high-frequency runs, maintain a dedicated "seen IDs" tab so the deduplication check stays fast. The tweet ID uniquely identifies every tweet, so this approach is reliable.

Why does my tweet ID look wrong in Google Sheets?

Twitter/X tweet IDs are 18–19 digit integers. Google Sheets stores numbers as 64-bit floats, which lose precision beyond 15 digits. The last few digits get rounded to zero, making the ID useless as a key. Fix this by storing IDs as strings — prefix with an apostrophe in the cell, or force string type in your script before writing.

How often should I schedule the export?

It depends on what you're monitoring. For brand mention alerts where timeliness matters, hourly is reasonable. For weekly trend reports or keyword analysis, daily is usually sufficient. Running more frequently than you need wastes API credits and creates noise. Match the frequency to how often the data actually informs a decision.

Is the official Twitter API required, or can I use ScrapeBadger directly?

You can use ScrapeBadger directly. The ScrapeBadger API provides structured Twitter data through standard REST endpoints — no Twitter developer account required. You authenticate with an x-api-key header and call endpoints like /v1/twitter/tweets/advanced_search from Apps Script or any HTTP client. This avoids the cost and complexity of managing Twitter API credentials separately.

What happens if my API key runs out of credits mid-run?

The ScrapeBadger API returns a 402 Payment Required status code when credits are exhausted. In your Apps Script, check the response code before parsing the body and log or email the failure. You can also create a dedicated API key per automation project from the dashboard and track per-key usage so you catch credit depletion before it stops your pipeline.

Can I pull data from multiple keywords into the same sheet?

Yes. The cleanest approach is to add a keyword column to your schema and run a separate API call per keyword per schedule. Each call writes rows tagged with its keyword. This lets you filter by keyword in Sheets without maintaining separate tabs — and it keeps the deduplication logic simple since tweet IDs remain the unique key regardless of which keyword triggered the collection.

Written by

Thomas Shultz

Thomas Shultz is the Head of Data at ScrapeBadger, working on public web data, scraping infrastructure, and data reliability. He writes about real-world scraping, data pipelines, and turning unstructured web data into usable signals.

Ready to get started?

Join thousands of developers using ScrapeBadger for their data needs.